Practical Generative Engine Optimization: Use AI Search Data to Win More Visibility

A hands-on guide to using Superlines AI search data to understand your brand's position in AI-generated results, identify competitive gaps, and create content that ChatGPT, Gemini, Perplexity, and other AI engines actually cite.


AI search engines don’t return ten blue links. ChatGPT, Gemini, Perplexity, Copilot, and Grok generate a synthesized paragraph in response to a question. That paragraph might mention your brand, link to your website, or ignore you entirely.

Generative Engine Optimization (GEO) is the practice of making sure AI engines mention you, cite you, and say good things when your topic comes up. This guide teaches you how to do it, step by step, using real AI search data from Superlines as the running example.

You will learn how to:

- Assess where your brand stands across AI platforms

- Find the specific gaps where competitors are winning and you are not

- Discover the hidden search queries that AI models use behind the scenes

- Turn every insight into a concrete content action

- Automate the ongoing optimization process with an AI agent

How AI search visibility works

Before optimizing, you need to understand what you are optimizing for. Superlines tracks five core metrics:

| Metric | What it measures | Why it matters | Strong benchmark |
|---|---|---|---|
| Brand Visibility (BV) | % of AI responses that mention your brand | Are AI engines aware of you? | >30% = Excellent |
| Citation Rate (CR) | % of AI responses that link to your website | Do AI engines trust you enough to cite? | >20% = Excellent |
| Sentiment | Positive, neutral, or negative language when you are mentioned | What are AI engines saying about you? | >75% positive |
| Average Position | How early in the response your brand appears | Are you the first recommendation or an afterthought? | 1.0–2.0 = Excellent |
| Branded Response Rate (BRR) | % of AI responses that mention any brand in your category | Is the query type competitively contested? | High BRR = competitive; Low BRR = first-mover opportunity |
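To make these definitions concrete, here is a minimal Python sketch that computes all five metrics from a list of AI responses. The `Response` fields are hypothetical and are not a Superlines export format. Sentiment is reduced to the share of brand mentions labeled positive, and Average Position is assumed to be averaged only over responses that mention you.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Optional


@dataclass
class Response:
    """One AI response to one tracked prompt (hypothetical record shape)."""
    mentions_brand: bool       # your brand name appears in the answer
    cites_domain: bool         # the answer links to your website
    mentions_any_brand: bool   # any brand in your category appears
    position: Optional[int]    # 1-based order of your brand, None if absent
    sentiment: Optional[str]   # "positive" / "neutral" / "negative", None if absent


def pct(part: int, whole: int) -> float:
    return 100.0 * part / whole if whole else 0.0


def compute_metrics(responses: list[Response]) -> dict[str, float]:
    total = len(responses)
    mentioned = [r for r in responses if r.mentions_brand]
    positions = [r.position for r in mentioned if r.position is not None]
    positive = sum(1 for r in mentioned if r.sentiment == "positive")
    return {
        "brand_visibility": pct(len(mentioned), total),
        # Computed over ALL responses, not only those that name the brand.
        "citation_rate": pct(sum(r.cites_domain for r in responses), total),
        "branded_response_rate": pct(sum(r.mentions_any_brand for r in responses), total),
        "positive_sentiment": pct(positive, len(mentioned)),
        # Lower is better; NaN when the brand never appears.
        "average_position": mean(positions) if positions else float("nan"),
    }


# Hypothetical sample: four responses to tracked prompts.
sample = [
    Response(True, True, True, 2, "positive"),
    Response(False, False, True, None, None),
    Response(True, False, True, 4, "neutral"),
    Response(False, False, False, None, None),
]
print(compute_metrics(sample))
```

Citation Rate is computed over all responses, so a response can count toward it without naming your brand. This is how Citation Rate can exceed Brand Visibility, as it does in the Step 1 snapshot.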

A note on BRR: Branded Response Rate tells you something different from the other metrics. It measures the competitive density of a query, not your brand’s own performance. A prompt with a BRR of 80% means AI engines mention at least one brand in 80% of responses to that query, so several competitors are already fighting for those mentions. A prompt with a BRR of 15% is relatively uncontested. Brands appear in only 15% of responses, so early, strong content can claim a position there with little competition. Use BRR to prioritize: low-BRR prompts with decent search volume are opportunities to build visibility in uncrowded territory before competitors discover them.
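The BRR prioritization rule can be expressed as a simple filter. This is a sketch under stated assumptions. The 25% BRR ceiling and the 200-search volume floor are illustrative thresholds, and the prompts and volumes are hypothetical.

```python
from typing import NamedTuple


class PromptStats(NamedTuple):
    prompt: str
    brr: float   # Branded Response Rate, 0-100
    volume: int  # estimated monthly search volume


def first_mover_candidates(prompts, max_brr=25.0, min_volume=200):
    """Uncrowded prompts (low BRR) that still have demand, biggest first.

    max_brr and min_volume are illustrative thresholds, not benchmarks.
    """
    hits = [p for p in prompts if p.brr <= max_brr and p.volume >= min_volume]
    return sorted(hits, key=lambda p: p.volume, reverse=True)


# Hypothetical prompts and volumes.
prompts = [
    PromptStats("best ai visibility tools", 80.0, 1500),           # contested
    PromptStats("how do ai engines choose sources", 15.0, 600),
    PromptStats("geo checklist for saas", 20.0, 150),              # too little volume
    PromptStats("track brand mentions in ai answers", 10.0, 900),
]
for p in first_mover_candidates(prompts):
    print(p.prompt, p.brr, p.volume)
```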

These metrics are fundamentally different from Google rankings. In traditional search, you optimize a page to rank for a keyword. In AI search, you optimize your entire brand presence so that AI models choose to include you when synthesizing an answer.

The Superlines platform sends hundreds of tracked prompts into AI engines and records every response. This gives you a map of where your brand is visible, where it is invisible, and what content you need to change that.

Step 1: Assess your brand’s current position

Goal: Get a clear picture of how visible your brand is across AI search, and identify immediate red flags.

Open your Superlines dashboard and look at the four overview cards across the top row: Brand Visibility, Citation Rate, Sentiment, and Brand Visibility By Platform. The fourth card breaks down your visibility across individual AI engines — this data feeds directly into Step 2. Below these cards, you’ll find trend charts for additional metrics including Average Position.

Here is what a real assessment looks like using Superlines’ own data:

Note: All example data in this guide represents a point-in-time snapshot. Your dashboard values will differ based on your brand, tracked prompts, and the current state of AI search.

| Metric | Current value | Trend | What it means |
|---|---|---|---|
| Brand Visibility | 4.0% | +2.3% ↑ | AI models are increasingly picking up Superlines content; strong positive momentum |
| Citation Rate | 8.9% | +3.9% ↑ | AI engines are linking to superlines.io in a meaningful and growing share of responses |
| Sentiment | 30% mixed | -34% ↓ | Mixed sentiment is a warning flag; the narrative around your brand needs attention |

Average Position (currently 11.4) appears as a separate trend chart below the overview cards. It tracks how early in the response your brand appears — lower is better.

How to interpret these numbers

Brand Visibility at 4.0% with strong momentum is encouraging. In this category, the market leader (Semrush) sits at 24.9%. For a newer product, 4.0% with a +2.3% upward trend means AI models are actively discovering and referencing your content. If your BV is below 0.5% and flat, that is a foundational problem — the AI models don’t have enough quality content about your brand to draw from.

Citation Rate growing alongside Brand Visibility is a healthy signal. It means AI engines are not just mentioning Superlines in text but actively linking to its pages — and doing so more often over time. To maintain this trajectory, find which pages are being cited (Step 4 covers this) and keep them updated with authoritative, current content.

Sentiment at 30% mixed is the most urgent concern. A declining sentiment trend means AI engines are describing your brand in neutral or unfavorable terms more frequently. This often happens when competitor content becomes more prominent in AI training data, shifting the narrative. The response is to publish proactive positioning content — comparison pages with honest pros and cons, customer case studies, and third-party reviews that reinforce the narrative you want AI models to learn.

Example: What to conclude from this assessment

Looking at the Superlines data, the assessment is:

“Our brand visibility is growing strongly (+2.3% BV) and AI models are increasingly citing our pages (+3.9% CR). However, sentiment has dropped to 30% mixed, signaling that the narrative AI engines use when discussing us needs attention. Priority: publish proactive positioning content to improve sentiment while continuing to strengthen the pages driving our citation growth.”

That is a specific, actionable conclusion — not a vague “we need to do better.”

Step 2: Find which AI platforms matter for your brand

Goal: Stop trying to optimize everywhere at once. Focus where the opportunity is largest.

Every AI engine pulls from different training data and has different citation patterns. Your brand visibility will vary wildly across platforms. Here is what Superlines’ platform breakdown looks like:

| Platform | Brand Visibility | What this means |
|---|---|---|
| Google AI Mode | 10.5% | Strongest presence: Google’s AI features are citing Superlines regularly |
| Copilot | 10.0% | Nearly as strong: Copilot draws from Bing’s index and is actively citing |
| Grok | 7.2% | Solid presence: Grok continues to cite Superlines content |
| Google AI Overviews | 2.1% | Moderate presence, drawn from indexed pages |
| Perplexity | 1.0% | Low but present: Perplexity cites recent, structured content |
| Gemini | 0.4% | Near-invisible |
| ChatGPT | 0.1% | Effectively invisible |
| Claude, DeepSeek, Mistral | 0.0% | Not mentioned at all |

How to use this data

Do not try to fix every platform. Pick one or two based on strategic importance:

- Protect where you are strong. Superlines has 10.5% visibility on Google AI Mode and 10.0% on Copilot. Find the prompts and content driving that visibility and keep them updated. Recovering a lost position takes more effort than gaining a new one.

- Attack where the audience is. ChatGPT handles the largest share of AI search queries, and Superlines is at 0.1%. That is the biggest opportunity, but improving ChatGPT visibility requires a specific approach. ChatGPT tends to cite roundup articles, comparison pages, and well-structured content on high-authority domains.

- Match platform to content type. Perplexity cites recent content with clear headings and direct answers. Google AI Mode and AI Overviews pull heavily from Google’s own index, and Copilot draws from Bing. Each platform responds to different content signals, so your strategy for each should differ.
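A rough way to apply this split is to separate the platforms you already hold from strategically important platforms where you are weak. The sketch below uses the Brand Visibility figures from the platform table. The 5% "protect" threshold and the strategic ranking you pass in are assumptions. Strategic importance is a judgment call, not a dashboard metric.

```python
visibility = {  # Brand Visibility (%) by platform, from the breakdown above
    "Google AI Mode": 10.5, "Copilot": 10.0, "Grok": 7.2,
    "Google AI Overviews": 2.1, "Perplexity": 1.0, "Gemini": 0.4,
    "ChatGPT": 0.1, "Claude": 0.0, "DeepSeek": 0.0, "Mistral": 0.0,
}


def triage(visibility, strategic_order, protect_at=5.0, max_targets=2):
    """Return (platforms to protect, platforms to attack).

    protect_at is an assumed threshold; strategic_order is your own ranking.
    """
    protect = sorted((p for p, v in visibility.items() if v >= protect_at),
                     key=visibility.get, reverse=True)
    # Attack only weak platforms, in the order of your strategic ranking.
    weak = [p for p in strategic_order if visibility.get(p, 0.0) < protect_at]
    return protect, weak[:max_targets]


protect, attack = triage(visibility, ["ChatGPT", "Perplexity", "Gemini"])
print("Protect:", protect)
print("Attack:", attack)
```

Pass a strategic ranking of ChatGPT, Perplexity, and Gemini. The sketch then selects ChatGPT and Perplexity as targets and Google AI Mode, Copilot, and Grok as platforms to protect. This matches the prioritization in the example that follows.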

Example: Choosing platform priorities

Based on the Superlines data, a reasonable prioritization would be:

- Priority 1: ChatGPT. Largest audience, nearly zero visibility. Requires getting featured in authoritative third-party comparison articles.

- Priority 2: Perplexity. Active audience of researchers and buyers, and Perplexity rewards fresh, well-structured content that Superlines can publish directly.

- Priority 3: Protect Google AI Mode, Copilot, and Grok. Already showing strong visibility at 7–10%; ensure these don’t decay.

Step 3: Discover what AI models are actually searching for

Goal: Find the hidden queries that AI engines generate when answering user questions, so you can create content that appears in those searches.

This is one of the most distinctive and underused capabilities in Superlines. When a user asks an AI engine a question, the AI doesn’t always answer from memory alone. Many AI systems run real-time web searches to ground their responses. They generate their own internal search queries, called fan-out queries, to find current information before composing an answer.

The Fan-Out Queries section in Superlines shows you exactly what those AI-generated search queries are, which URLs are winning citations for each one, and whether your site appears in the results.

Example: Reading fan-out query data

Here is a sample of fan-out queries from Superlines’ tracked prompts:

| Fan-out query | Occurrences | Volume | Top URL | Your Top URL | Brands |
|---|---|---|---|---|---|
| ChatGPT vs competitors | 30 | 1,200 | Visual Capitalist | — | 5 |
| alternative to Morningscore… | 30 | 480 | Sell.ms | superlines.io | 3 |
| features pricing | 20 | 320 | AUQ.io | — | 4 |
| rank tracking | 26 | 2,100 | AIClicks | — | 6 |
| generative engine optimization | 14 | 890 | PR Newswire | — | 2 |

The Occurrences column shows how often the AI generated this particular search query across your tracked prompts. Volume shows the estimated traditional search volume, helping you gauge reach beyond AI. Top URL is the page most frequently cited by AI engines for that query. Your Top URL shows whether any of your pages appeared. Brands indicates how many brands compete for visibility on that query.

What this tells you — and what to do about it

Out of five high-occurrence fan-out queries, Superlines appears in only one. This is the critical insight: if the AI can’t find your content when it searches the web, it can’t cite you. You are blocked at the source.

Each fan-out query where your Top URL column is empty is a content brief. Here is how to translate the data into action:

| Gap | Content action |
|---|---|
| “ChatGPT vs competitors” (no URL) | Publish a comprehensive ChatGPT comparison page or get featured in existing comparison articles on high-authority domains |
| “rank tracking” (no URL, high volume) | Create a page targeting “AI rank tracking tools” with Superlines positioned prominently |
| “generative engine optimization” (no URL) | Publish the definitive guide to GEO (like the article you are reading now) |
| “alternative to Morningscore…” (appearing weakly) | Improve the existing comparison page: add structured data, fresher benchmarks, and build backlinks to strengthen your position |

Fan-out queries are the closest thing AI search has to keywords. Treat this table as your primary content calendar input.
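One way to turn the fan-out table into a ranked content calendar is sketched below, using the sample rows from that table. Gaps are ranked by AI occurrences first and traditional volume second. This is a design choice: occurrences measure how often AI engines actually run the query. `None` stands for an empty Your Top URL cell.

```python
from typing import NamedTuple, Optional


class FanOut(NamedTuple):
    query: str
    occurrences: int             # times AI engines generated this query
    volume: int                  # estimated traditional search volume
    your_top_url: Optional[str]  # None when none of your pages appeared


rows = [  # sample rows from the fan-out table above
    FanOut("ChatGPT vs competitors", 30, 1200, None),
    FanOut("alternative to Morningscore…", 30, 480, "superlines.io"),
    FanOut("features pricing", 20, 320, None),
    FanOut("rank tracking", 26, 2100, None),
    FanOut("generative engine optimization", 14, 890, None),
]


def content_calendar(rows):
    """Queries where you have no URL, most-searched-by-AI first."""
    gaps = [r for r in rows if r.your_top_url is None]
    return sorted(gaps, key=lambda r: (r.occurrences, r.volume), reverse=True)


for r in content_calendar(rows):
    print(f"{r.query}: {r.occurrences} occurrences, {r.volume} volume")
```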

Step 4: Identify which competitor content is winning — and why

Goal: Understand specifically which pages the AI models cite most, and reverse-engineer what makes them successful.

The Top Domains & URLs section shows every URL that appears in AI responses to your tracked prompts, ranked by citations. This is competitive intelligence in its purest form.

Example: Competitive citation analysis

Looking at the top-cited pages across all tracked prompts:

| Rank | URL | Citations | Change |
|---|---|---|---|
| 1 | seranking.com/blog/chatgpt-rank-tracking-tools-2026 | 1,343 | NEW |
| 2 | seranking.com/blog/best-ai-visibility-tools | 975 | +98% |
| 3 | rankability.com/blog/best-ai-search-visibility-tracking-tools | 782 | +1,033% |

Three patterns to notice

1. Comparison articles dominate. The top-cited pages are “best tools” roundups on third-party domains. AI engines, when asked about a category, reach for comprehensive comparison content. If you are not featured in these articles, you are missing the highest-value citation opportunities.

2. Freshness gets rewarded fast. The Rankability page grew +1,033% in citations — meaning someone recently published or updated a comparison article and AI engines immediately started citing it heavily. AI search rewards recency more aggressively than traditional search.

3. Content quality and structure can beat domain size. Rankability is outperforming many larger sites because its content is specifically structured for AI citability — clear headings, direct answers, comprehensive coverage. The platform shows you citation counts and growth rates, so you can identify which content formats are working regardless of the domain behind them.
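Backing out last period’s citation counts shows how large the freshness effect in pattern 2 is. This sketch makes two assumptions. First, the Change column is percent growth over the previous period. Second, NEW means the URL had no prior count. The label parsing handles only the formats shown in the citation table.

```python
from typing import Optional


def previous_count(current: int, change: str) -> Optional[float]:
    """Estimate last period's citations from a '+98%' or '+1,033%' label.

    Assumes the label is percent growth over the previous period.
    """
    if change == "NEW":
        return None  # no prior period to compare against
    growth = float(change.rstrip("%").replace(",", "")) / 100
    return current / (1 + growth)


top_urls = [  # from the competitive citation table above
    ("seranking.com/blog/chatgpt-rank-tracking-tools-2026", 1343, "NEW"),
    ("seranking.com/blog/best-ai-visibility-tools", 975, "+98%"),
    ("rankability.com/blog/best-ai-search-visibility-tracking-tools", 782, "+1,033%"),
]
for url, now, change in top_urls:
    before = previous_count(now, change)
    trend = "new this period" if before is None else f"about {before:.0f} -> {now}"
    print(f"{url}: {trend}")
```

Under that assumption, the Rankability page went from roughly 69 citations to 782 in a single period.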

Turn this into action

For your own URLs: Filter to “My URLs” and list every page currently being cited. These are your highest-value content assets. Protect them like you would a page ranking #1 in Google — keep them updated, expand them, and ensure they contain the most current information.

For competitor URLs: For each fast-growing competitor URL, analyze what makes it successful:

- Is it a comparison/roundup article? Create or get featured in equivalent content.

- Is it freshly published? Update your own competing pages with newer data.

- Does it have a clear, structured format? Match or exceed that structure.

If you have the AEO Agent set up, this is where Phase 2 (Competitive Deep Dive) comes in — the agent’s Researcher automatically scrapes the top competitor URLs, analyzes their content structure, and identifies what makes them effective for AI citations.

Step 5: Find your highest-leverage opportunities

Goal: Instead of guessing what to work on, use data to identify the specific prompts and topics where effort will produce the biggest return.

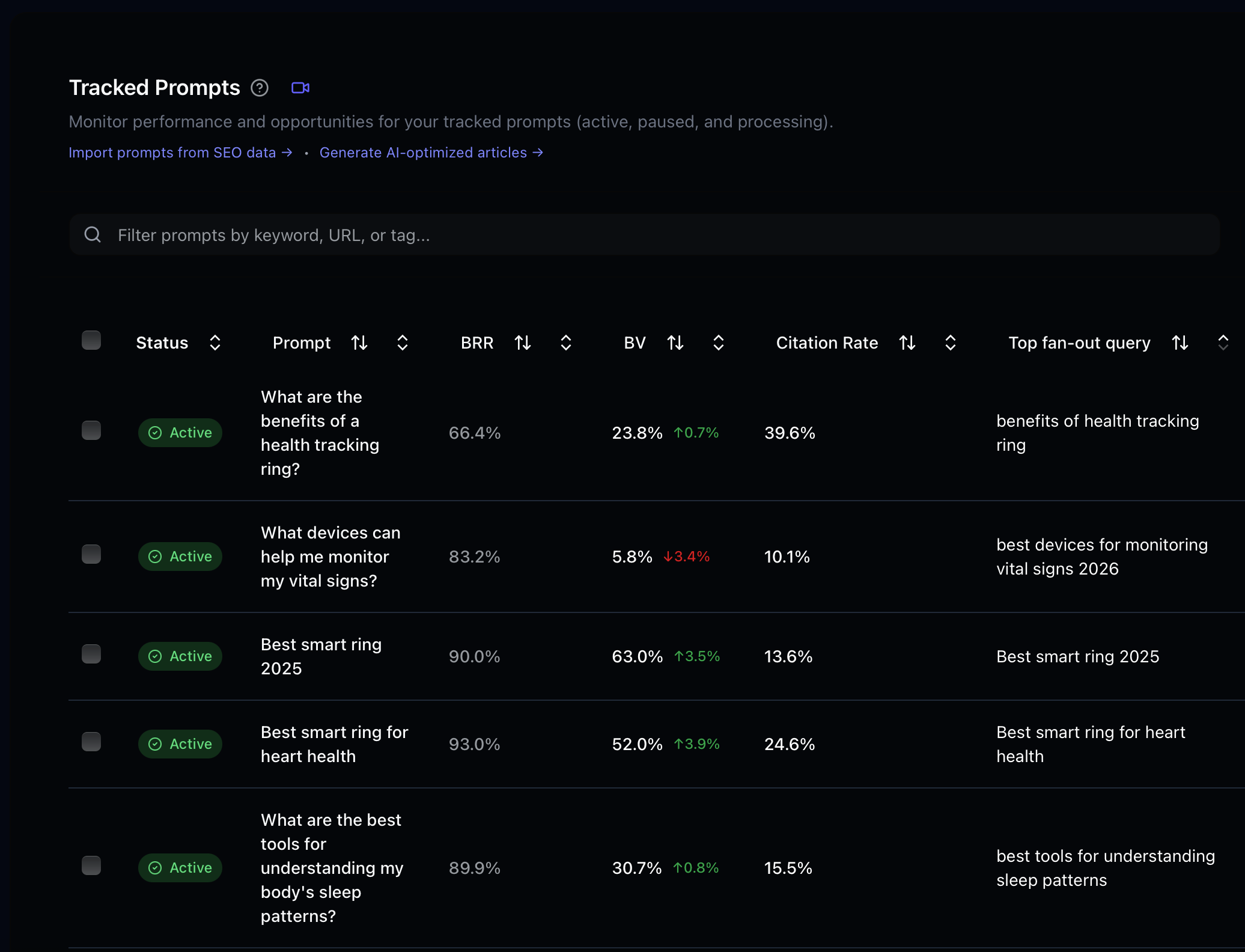

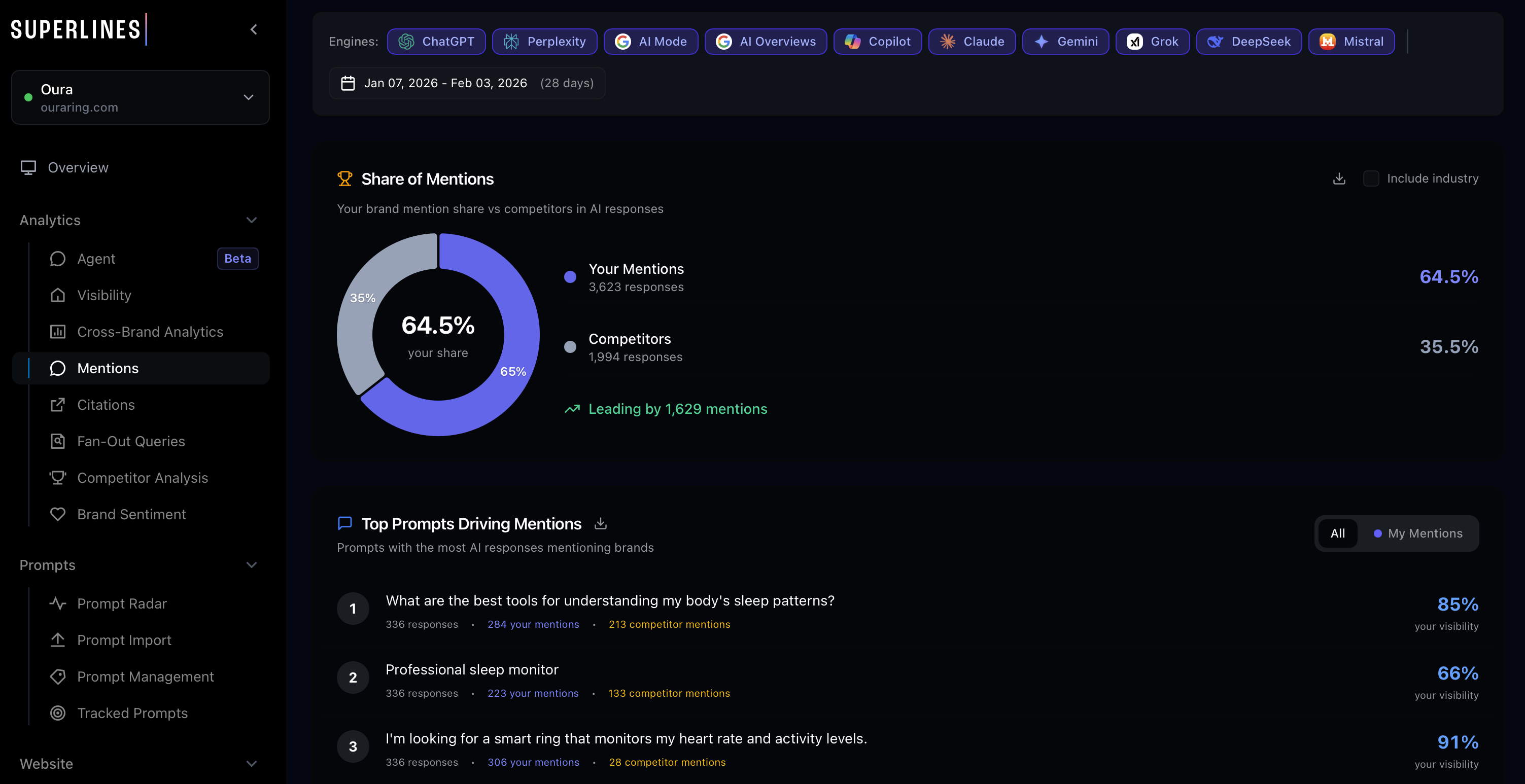

Superlines surfaces opportunities through two key sections: the Citations Gap (under Analytics → Citations) and the Tracked Prompts table (under the Prompts tab). Together, these give you a systematic view of where you are losing to competitors and where new content will have the biggest impact.

Citations Gap — Where competitors win and you don’t

The Citations Gap section (Analytics → Citations) shows the specific URLs where competitors are earning AI citations that you are not. This is your most direct competitive intelligence.

Example: The Citations Gap shows 50 opportunities where competitor URLs are cited in AI responses to your tracked prompts, but your site is not.

Each gap is a concrete content brief. For each competitor URL winning citations, analyze what makes it successful — is it a comparison article, a how-to guide, a data-driven report? — and create equivalent or better content on your own domain.
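Conceptually, the Citations Gap is a set difference: prompts where competitor URLs are cited and none of yours are. Here is a minimal sketch. The prompt-to-URLs mapping is hypothetical sample data. Treating any URL on your domain as "you are cited" is a simplifying assumption.

```python
from urllib.parse import urlparse


def host(url: str) -> str:
    """Domain of a URL; accepts bare 'domain/path' strings too."""
    parsed = urlparse(url if "://" in url else "https://" + url)
    return parsed.netloc.lower().removeprefix("www.")


def citations_gap(cited_by_prompt: dict[str, set[str]], my_domain: str) -> dict[str, list[str]]:
    gaps = {}
    for prompt, urls in cited_by_prompt.items():
        if any(host(u) == my_domain for u in urls):
            continue  # you already earn a citation here
        gaps[prompt] = sorted(urls)  # competitor URLs to study
    return gaps


# Hypothetical sample: which URLs AI responses cited for each prompt.
cited = {
    "best ai visibility tools": {
        "seranking.com/blog/best-ai-visibility-tools",
        "rankability.com/blog/best-ai-search-visibility-tracking-tools",
    },
    "alternative to morningscore chatgpt tracker": {"sell.ms", "https://www.superlines.io"},
}
gaps = citations_gap(cited, "superlines.io")
for prompt, urls in gaps.items():
    print(prompt, "->", urls)
```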

Tracked Prompts — Per-prompt visibility analysis

The Tracked Prompts table shows per-prompt metrics including your Brand Visibility, Citation Rate, and which competitors appear for each prompt. Use this to identify four types of opportunities:

Visibility gaps: Prompts where your Brand Visibility is 0% but competitors are present. These are category-defining queries where you need to build authority. Prioritize prompts with buying intent — queries like “alternative to [competitor]” or “best tools for [your category]” indicate users in active evaluation mode.

Competitor dominance: Prompts where a single competitor has high visibility (50%+) and you have little or none. A large gap on a category-defining prompt means you need content that AI engines recognize as authoritative on that exact topic.

Existing citations to protect: Prompts where you already have a presence. Don’t create something new — protect what you have. Update cited pages regularly, add fresh data, and build additional backlinks. Decay is the biggest risk for existing citation positions.

High-volume gaps: Cross-reference prompt data with fan-out query volumes (Step 3) to find high-traffic queries where you have no content. These are confirmed content gaps where a targeted, well-structured page can earn a citation position.

Prioritizing opportunities

Not all opportunities are equal. Use this framework:

| Priority | Signal | Action timeline |
|---|---|---|
| Highest | You already have citations — protect them | This week: update and strengthen the page |
| High | Competitor dominates a category-defining prompt | This week: start a content brief |
| Medium | Visibility gap on buying-intent prompt | Next 2 weeks: create comparison content |
| Lower | High-volume gap on informational prompt | Add to monthly content calendar |
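The prioritization framework can be encoded as an ordered set of checks on each Tracked Prompts row. In this sketch the row fields are hypothetical. `category_defining` and `buying_intent` are your own labels, not dashboard columns. The 50% competitor threshold comes from the "Competitor dominance" description above. The 5% "low visibility" and 1,000-volume cut-offs are assumptions.

```python
from dataclasses import dataclass


@dataclass
class PromptRow:
    prompt: str
    my_bv: float              # your Brand Visibility on this prompt (%)
    my_citations: int         # responses that cite your domain
    top_competitor_bv: float  # best competitor's visibility (%)
    category_defining: bool   # your own label
    buying_intent: bool       # e.g. "alternative to X", "best tools for Y"
    volume: int               # estimated search volume


def priority(row: PromptRow, low_bv: float = 5.0, high_volume: int = 1000) -> str:
    # Checks run in framework order, so the first match wins.
    if row.my_citations > 0:
        return "Highest"   # protect: update the cited page this week
    if row.category_defining and row.top_competitor_bv >= 50 and row.my_bv < low_bv:
        return "High"      # start a content brief this week
    if row.buying_intent and row.my_bv == 0 and row.top_competitor_bv > 0:
        return "Medium"    # comparison content in the next 2 weeks
    if not row.buying_intent and row.my_bv == 0 and row.volume >= high_volume:
        return "Lower"     # monthly content calendar
    return "Monitor"


rows = [  # hypothetical Tracked Prompts rows
    PromptRow("alternative to morningscore chatgpt tracker", 12.0, 3, 40.0, False, True, 480),
    PromptRow("best ai search visibility tools", 0.0, 0, 62.0, True, True, 1500),
    PromptRow("alternative to semrush for ai tracking", 0.0, 0, 30.0, False, True, 300),
    PromptRow("what is ai rank tracking", 0.0, 0, 20.0, False, False, 2100),
]
for row in rows:
    print(priority(row), "-", row.prompt)
```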

Step 6: Create content that AI engines actually cite

Goal: Learn the specific content patterns that make AI models more likely to mention and link to your pages.

AI engines don’t cite content randomly. They follow patterns, and understanding those patterns is the difference between content that gets referenced and content that gets ignored.

Content structure rules for AI citability

Based on analyzing hundreds of top-cited pages across AI platforms, here are the patterns that work:

1. Lead with a direct answer. AI engines are looking for a clear answer to synthesize. If your page buries the answer under 500 words of introduction, the AI will find a competitor page that answers directly.

```markdown
# What Is Generative Engine Optimization?

Generative Engine Optimization (GEO) is the process of optimizing
your brand's presence in AI-generated search results from ChatGPT,
Gemini, Perplexity, and similar platforms. Unlike traditional SEO,
which targets search engine rankings, GEO focuses on getting
mentioned, cited, and recommended in AI-synthesized responses.
```

The first paragraph answers the question. Everything after that expands on the answer.

2. Use headings that match search queries. AI models search the web using natural language queries (the fan-out queries from Step 3). Your H2 and H3 headings should match those queries directly.

```markdown
## How does AI search visibility differ from traditional SEO?
## Which AI platforms are most important for brand visibility?
## How to track your brand mentions in ChatGPT and Gemini
```

Each heading is a question that an AI model might search for. When the heading matches the query, the AI is more likely to cite the content beneath it.

3. Include external data and statistics. AI engines view pages with external data citations as more authoritative. Include at least 3 statistics from third-party sources per article.

```markdown
According to a 2025 Gartner study, 65% of B2B buyers now use
AI search tools during their evaluation process
([source](https://www.gartner.com/...)).
```

Pages that cite data are cited more than pages that make unsupported claims.

4. Structure comparisons as tables. When AI models need to compare options, they look for structured data. Tables are easier for AI to parse and reference than paragraph text.

```markdown
| Tool | AI Platforms Tracked | Citation Tracking | Starting Price |
|------|---------------------|-------------------|----------------|
| Superlines | 10+ (ChatGPT, Gemini, etc.) | Yes | €89/mo |
| Competitor A | 5 | Limited | $149/mo |
| Competitor B | 3 | No | $99/mo |
```

5. Add FAQ sections. FAQ content maps directly to the question-answer format that AI engines use. Aim for 5 questions per article, each answered in 2-3 sentences.
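Before publishing, you can run a rough self-check against these five rules. The Python sketch below is a heuristic linter for a Markdown draft. It is not a model of how any AI engine scores pages. The following rules are all assumptions:
- the 80-word limit on the direct answer
- the requirement that at least half of the H2/H3 headings be phrased as questions
- counting outbound links as a stand-in for third-party statistics

```python
import re

# Assumed list of words that usually open a question-style heading.
QUESTION_WORDS = {"how", "what", "which", "why", "when", "who", "can", "does", "is", "should"}


def is_question(heading: str) -> bool:
    words = heading.lower().split()
    return heading.endswith("?") or (bool(words) and words[0] in QUESTION_WORDS)


def first_paragraph(lines: list[str]) -> str:
    """First block of body text, skipping headings and blank lines."""
    para: list[str] = []
    for line in lines:
        text = line.strip()
        if not text or text.startswith("#"):
            if para:
                break
            continue
        para.append(text)
    return " ".join(para)


def lint_for_ai_citability(markdown: str, max_answer_words: int = 80) -> dict[str, bool]:
    lines = markdown.splitlines()
    headings = [line.lstrip("#").strip() for line in lines if re.match(r"#{2,3}\s", line)]
    answer_words = len(first_paragraph(lines).split())
    links = re.findall(r"\]\((https?://[^)\s]+)\)", markdown)
    return {
        "direct_answer_first": 0 < answer_words <= max_answer_words,
        "question_headings": bool(headings) and 2 * sum(map(is_question, headings)) >= len(headings),
        "three_plus_sources": len(links) >= 3,  # heuristic proxy for cited statistics
        "comparison_table": any(re.match(r"\|\s*:?-{3,}", line.strip()) for line in lines),
        "faq_section": any("faq" in h.lower() or "frequently asked" in h.lower() for h in headings),
    }


# Hypothetical draft with placeholder links.
draft = """# What Is Generative Engine Optimization?

Generative Engine Optimization (GEO) is the process of optimizing your
brand's presence in AI-generated search results.

## How does AI search visibility differ from traditional SEO?

See [one study](https://example.com/a) and [another](https://example.com/b).

## Pricing overview

| Tool | Citation Tracking |
|------|-------------------|
| Superlines | Yes |
"""
print(lint_for_ai_citability(draft))
```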

How the AEO Agent automates content creation

If you’re creating or updating content manually, the rules above will guide you. But if you want to scale the process, the Superlines AEO Agent automates the entire content intelligence and writing workflow.

The agent runs a 7-phase pipeline that maps directly to the steps in this guide:

```text
Step in this guide             AEO Agent phase
─────────────────────          ──────────────────────────────
Step 1: Assess position     →  Phase 1: Intelligence Gathering
Step 4: Analyze competitors →  Phase 2: Competitive Deep Dive
Protect existing content    →  Phase 3: Content Health Audit
Keep data accurate          →  Phase 4: Fact-Check
Find new angles             →  Phase 5: Industry Insights
Embed data in content       →  Phase 6: Data Storytelling
Create + optimize pages     →  Phase 7: Content Actions
```

Here is what each phase does in practice:

Phase 1 — Intelligence Gathering. The Analyst agent calls Superlines’ analytics tools to pull brand visibility metrics, citation rates, competitive gaps, and content opportunities. This is the automated version of Steps 1-3 in this guide.

Phase 2 — Competitive Deep Dive. The Researcher agent scrapes the top competitor URLs that are winning AI citations (the same URLs you found in Step 4) and analyzes their content structure, topics, and data citations.

Phase 3 — Content Health Audit. The Content Manager inventories all published articles and flags any that are older than 6 months, contain outdated year references, or are missing key structural elements.

Phase 4 — Fact-Check. The agent extracts every claim in your content — pricing, statistics, feature counts, dates — and verifies them against live sources. Outdated claims are flagged for correction.

Phase 5 — Industry Insights. The Researcher searches for trending topics in AI search optimization, GEO, and AEO across the web and Reddit, identifying new content ideas and data points to embed in articles.

Phase 6 — Data Storytelling. The Analyst mines your Superlines analytics data for compelling insights — performance by LLM platform, sentiment trends, brand mention patterns — that can be embedded directly into articles as proprietary data.

Phase 7 — Content Actions. Based on everything gathered in phases 1-6, the Content Manager creates new article drafts, updates outdated content, and fixes incorrect facts in your CMS. All new content is created as a draft for human review — the agent never auto-publishes.
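The Phase 3 audit rules are simple enough to sketch. This is not the AEO Agent’s implementation. It is a minimal Python illustration under these assumptions:
- each article is a dict with hypothetical `title`, `updated`, and `body` keys
- 6 months is treated as 183 days
- only the title is checked for outdated years

```python
import re
from datetime import date, timedelta

STALE_AFTER = timedelta(days=183)  # roughly 6 months (assumption)


def audit(article: dict, today: date) -> list[str]:
    """Flag one article; expects hypothetical keys 'title', 'updated', 'body'."""
    flags = []
    if today - article["updated"] > STALE_AFTER:
        flags.append("older than 6 months")
    # Simplification: only years in the title count as outdated references,
    # because past years in the body are often legitimate (e.g. a cited study).
    old_years = sorted({int(y) for y in re.findall(r"\b(20\d{2})\b", article["title"])
                        if int(y) < today.year})
    if old_years:
        flags.append(f"outdated year in title: {old_years}")
    if not re.search(r"^#{2,3} ", article["body"], flags=re.MULTILINE):
        flags.append("missing structure: no H2/H3 headings")
    return flags


today = date(2026, 3, 9)  # fixed date so the example is reproducible
stale = {"title": "Best AI visibility tools 2025", "updated": date(2025, 6, 1),
         "body": "A single wall of text."}
fresh = {"title": "Best AI visibility tools 2026", "updated": date(2026, 2, 1),
         "body": "## What is GEO?\nShort answer first."}
print(audit(stale, today))
print(audit(fresh, today))
```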

The agent runs daily, producing a report and CMS updates each time. Over weeks, this creates a compounding effect: content gaps get filled, outdated facts get corrected, and new competitive signals get acted on.

To set up the agent, follow either:

- Beginner setup guide — for non-technical users, walks through every click

- Advanced build guide — for developers who want to build it from scratch

Step 7: Monitor mentions to understand your brand narrative

Goal: Know exactly how AI engines describe your brand, and correct the narrative when it drifts.

The Latest Brand Mentions section shows the actual prompts where an AI engine mentioned your brand, which engine generated the mention, how the mention was sourced, its current status, and when it was last tested.

Example: Reading the mention context

Recent Superlines mentions:

| Engine | Prompt | Source | Status | Tested |
|---|---|---|---|---|
| Copilot | “best ai mode rank tracker tool” | Prompt Radar | Active | Mar 8, 2026 |
| Copilot | “ai search visibility tools comparison” | SERP | Cited | Mar 8, 2026 |
| Grok | “best copilot rank tracking” | Prompt Radar | Mentioned | Mar 7, 2026 |
| Grok | “alternative to morningscore chatgpt tracker” | SERP | Cited | Mar 7, 2026 |

The Source column tells you whether the mention came from a SERP (search engine results page) analysis or from Prompt Radar, Superlines’ prompt discovery feature. The Status column distinguishes between Active, Cited (linked to your domain), and Mentioned (brand name appears but without a link) — this distinction helps you understand whether AI engines trust you enough to link or merely reference you by name.

What to do with this data

Search for the actual AI response. For each mention, go to that AI platform and ask the same question. Read how your brand is described. The language the AI uses reflects the dominant narrative in the content it was trained on or retrieved. If the description is vague, generic, or positions you as an afterthought, that is a direct signal that your content is not differentiating enough.

Track the Cited vs. Mentioned ratio. If most of your mentions are “Mentioned” rather than “Cited,” AI engines are aware of your brand but not treating your content as authoritative enough to link. The fix is to strengthen the specific pages that align with these prompts — add structured data, deepen the content, and ensure direct answers to the questions being asked.

Use as a weekly pulse check. Compare this week’s mentions to last week’s. New prompts appearing in mentions mean your reach is expanding. Prompts dropping out signal content decay on the pages being cited.
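Both checks are easy to compute from a list of mentions. In this sketch the four rows mirror the sample mentions table, and last week’s prompt set is hypothetical. `Active` rows are left out of the ratio because the guide does not say whether they include a link.

```python
from collections import Counter

this_week = [  # (engine, prompt, status), from the sample mentions table
    ("Copilot", "best ai mode rank tracker tool", "Active"),
    ("Copilot", "ai search visibility tools comparison", "Cited"),
    ("Grok", "best copilot rank tracking", "Mentioned"),
    ("Grok", "alternative to morningscore chatgpt tracker", "Cited"),
]
last_week_prompts = {"best copilot rank tracking", "ai seo tools list"}  # hypothetical


def cited_share(mentions) -> float:
    """Cited / (Cited + Mentioned); low values mean named but not linked."""
    counts = Counter(status for _, _, status in mentions)
    named = counts["Cited"] + counts["Mentioned"]
    return counts["Cited"] / named if named else 0.0


def prompt_changes(mentions, previous_prompts):
    """Prompts that appeared this week, and prompts that dropped out."""
    current = {prompt for _, prompt, _ in mentions}
    return sorted(current - previous_prompts), sorted(previous_prompts - current)


new, dropped = prompt_changes(this_week, last_week_prompts)
print(f"Cited share: {cited_share(this_week):.0%}")
print("New prompts:", new)
print("Dropped prompts (check those pages for decay):", dropped)
```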

Step 8: Translate visibility into business impact

Goal: Connect AI search metrics to numbers that leadership cares about.

Your dashboard overview already contains the data you need to build a business case. The core metrics — total responses tracked, brand mentions, and citations — form a natural funnel:

| Funnel stage | Value | Rate |
|---|---|---|
| AI Responses Tracked | 5,864 responses analyzed | — |
| Brand Mentions | 234 responses mentioning brand | 4.0% Brand Visibility |
| Domain Citations | 524 responses linking to site | 8.9% Citation Rate |

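That 8.9% citation rate means roughly 1 in 11 AI responses to your tracked prompts links directly to your domain. Citations can outnumber mentions (524 vs. 234 here) because a response can link to your site without naming your brand in the text. This traffic tends to carry high intent because users are actively researching. AI-driven referrals may also convert at a higher rate than average web traffic, which is worth verifying against your own analytics.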

For deeper traffic analytics, Superlines offers the GA4 AI Traffic section (under Website → GA4 AI Traffic in the sidebar), which connects to your Google Analytics 4 account to track actual LLM referral traffic — showing you exactly how many visitors arrive at your site from AI engines and what they do after landing.

How to use this in reporting

When presenting to stakeholders, frame the metrics as a funnel:

“Our brand appears in 234 of the 5,864 AI-generated responses we track (4.0% Brand Visibility), and 524 tracked responses link to our site (8.9% Citation Rate). Both metrics are trending upward. Based on our content roadmap, we project improving brand visibility from 4.0% to 6-8% within 60 days, which would increase brand mentions by roughly 50-100% at the same tracking volume.”

This is a concrete business metric that ties content work to measurable outcomes.
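Check the arithmetic behind the funnel and the projection before it goes in front of stakeholders. This sketch uses the funnel counts from the table above. It assumes the number of tracked responses stays at 5,864, which is what any projection from a Brand Visibility target implicitly relies on.

```python
TRACKED, MENTIONS, CITATIONS = 5864, 234, 524  # funnel counts from the table


def rate(count: int, total: int = TRACKED) -> float:
    return count / total


def project_mentions(target_bv: float, total: int = TRACKED) -> int:
    """Mentions implied by a Brand Visibility target, assuming constant tracking volume."""
    return round(total * target_bv)


print(f"Brand Visibility {rate(MENTIONS):.1%}, Citation Rate {rate(CITATIONS):.1%}")
print(f"About 1 in {TRACKED / CITATIONS:.0f} responses links to the site")
for target in (0.06, 0.08):
    projected = project_mentions(target)
    print(f"{target:.0%} BV -> {projected} mentions ({projected / MENTIONS:.1f}x today)")
```

Under that assumption, 6% Brand Visibility implies about 352 mentions (1.5x today) and 8% about 469 (2.0x). A projection of 6-8% therefore doubles mentions only at its upper end.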

A weekly GEO workflow

Once you understand the data and have a content process in place, GEO becomes a weekly routine. Here is a practical 30-minute schedule:

Monday: Health check (10 minutes)

- Check Brand Visibility, Citation Rate, and Sentiment in the Overview

- Note any metric that moved more than ±1% week-over-week

- If sentiment dropped: schedule a content response to reinforce your positioning

- If a platform dropped: check which prompts are tracked for that platform

Tuesday: Opportunity review (10 minutes)

- Review the Citations Gap section (Analytics → Citations) for new competitor URLs winning citations you are not

- Check Tracked Prompts for prompts where competitors dominate and you have low or zero visibility

- If using the AEO Agent: review its latest report for automatically surfaced opportunities

Wednesday: Mention scan (5 minutes)

- Review the Latest Brand Mentions section for new prompts where AI engines mentioned your brand

- Search for 2-3 of the actual AI responses and read the language used

- Flag any mischaracterization of your brand for a content correction

Friday: Fan-out query gap check (5 minutes)

- Look at the top 10 fan-out queries by occurrences

- For any query gaining occurrences where your Top URL column is empty, add it to your content planning as a confirmed gap

- For any query where you appear but competitors dominate the Top URL, add it to your optimization queue
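The Monday threshold check is the easiest part of this routine to script. The sketch below reads "±1%" as one percentage point, which is an assumption. The metric names and all values are hypothetical.

```python
def flag_moves(last_week: dict[str, float], this_week: dict[str, float],
               threshold_pp: float = 1.0) -> dict[str, float]:
    """Metrics (in %) that moved more than threshold_pp points week over week.

    Reading "±1%" as percentage points is an assumption.
    """
    return {
        name: round(this_week[name] - last_week[name], 2)
        for name in this_week
        if name in last_week and abs(this_week[name] - last_week[name]) > threshold_pp
    }


# Hypothetical dashboard readings (%).
last_week = {"brand_visibility": 3.6, "citation_rate": 7.5, "positive_sentiment": 52.0}
this_week = {"brand_visibility": 4.0, "citation_rate": 8.9, "positive_sentiment": 48.5}

for name, delta in flag_moves(last_week, this_week).items():
    print(f"{name}: {delta:+.1f} points")
    if name == "positive_sentiment" and delta < 0:
        print("  -> schedule a content response to reinforce positioning")
```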

The compounding effect

AI search visibility is not a one-time optimization. It compounds. Every article you publish, every comparison page you update, every third-party mention you earn makes it more likely that AI models will mention and cite you in the future.

The Superlines dashboard gives you the data to see exactly where trust is building and where it is absent. Fan-out queries tell you what the AI is searching for. Citation URLs tell you what it trusts. The Citations Gap and Tracked Prompts tell you where the biggest gaps are. And trend charts tell you whether your work is moving the needle.

The AEO Agent, if you choose to run it, turns this from a weekly manual process into a daily automated one — surfacing gaps, verifying facts, and producing content drafts that address the exact opportunities your data reveals.

Start with one action: open your dashboard, run through the steps above, and pick a single content brief. That first brief — written, published, and tracked — is how AI search visibility compounds over time.

What to read next

- Setup Guide: Superlines MCP and AEO Agent for Non-Technical Users — Get the agent running in 30 minutes

- Build a GEO + SEO Marketing Agent in Claude Desktop — Combine AI search and traditional SEO analysis

- Build an Agentic AEO Content Pipeline — The full developer guide to the 7-phase agent